What AI Misses Matters More than What it Sees

By Roger Irwin

Many people talk about whether AI is good or bad, but only a select few ask what it overlooks entirely.

The Missed Details

In 2005, a young man from Central Los Angeles was accepted into his dream Ivy League school. He wrote in his application about wanting to escape the gang culture in his neighborhood, but when the admissions team looked up his Myspace profile and saw gang symbols and foul language, they rescinded the offer.

To the admissions officers, the images confirmed their suspicions. But as internet scholar Danah Boyd later learned, those signals may have been a form of self-protection. In his neighborhood, not signaling certain affiliations could be dangerous. Sociologist Paul Willis has observed that when young people try to change their socioeconomic standing, they risk alienating their home community. What looked like a red flag to the school might have been a survival strategy.

Now imagine that the admission decision was not made by a university employee but by a computer vision model trained to detect “gang-related” imagery. The model would make its judgment without knowing anything about neighborhood politics, personal safety, or mixed social signals. It could deliver its conclusion instantly and confidently, yet still be deeply wrong.

This is where the conversation about AI needs to go. Instead of only asking if AI is fair or biased, we should be asking what it misses entirely.

Lessons from Blind Spots

Computer vision researcher Ali Borji, who has spent years studying where AI systems fail, has warned that the field routinely hides or reframes its mistakes, which he calls “negative results.” Studies that reveal model errors often go unpublished, and successes are sometimes announced without the full context of what was tried and did not work.

Kate Crawford, a leading scholar of the social and political implications of AI, and artist-researcher Trevor Paglen, known for examining how technology shapes perception, argue that AI systems “shape the world in their own image” because they are built on training datasets steeped in cultural assumptions. The parts of the world those datasets overlook, such as uncommon life experiences, underrepresented communities, or unique communication styles, often vanish from the model’s “view” entirely.

In admissions, that might mean an algorithm does not recognize certain forms of leadership or resilience because it was never trained to value them. What the system never learned to see will never appear in its rankings.

Reading Between the Algorithms

Colleges have already begun using AI in admissions, not to replace people, but to help predict which students will enroll, decide how to award scholarships, or assist with applicant review. At one large public university, researchers used machine learning and genetic algorithms to fine-tune scholarship offers. The results looked impressive on paper: enrollment rose by more than 23 percent. But the system also gave smaller awards to students already likely to enroll, which meant less aid for those most intent on getting the education (Aulck et al., 2020).

AI can also draw surprising conclusions from everyday information. In 2019, a team analyzing over 280,000 college essays was able to predict a student’s race and socioeconomic background with up to 80 percent accuracy (Alvero et al., 2019). Another project used text analysis alongside Google Cloud Vision to classify social media posts into Big Five personality traits, finding patterns in the background, objects, and even embedded text in images (Biswas et al., 2022).

The ability to judge “fit” from digital traces is already here. But so is the risk of judging badly. This is where the admissions metaphor becomes useful for all of us. Imagine you are an admissions officer with an AI tool on your desk. Would you trust the system’s conclusions? Could you spot the patterns it consistently misses? Now switch roles: imagine you are reviewing how another admissions officer is using AI to make their decisions. How would you assess their judgment? These same questions apply in decisions of all types: hiring, lending, healthcare, and countless other areas where AI is gaining influence.

Conclusion

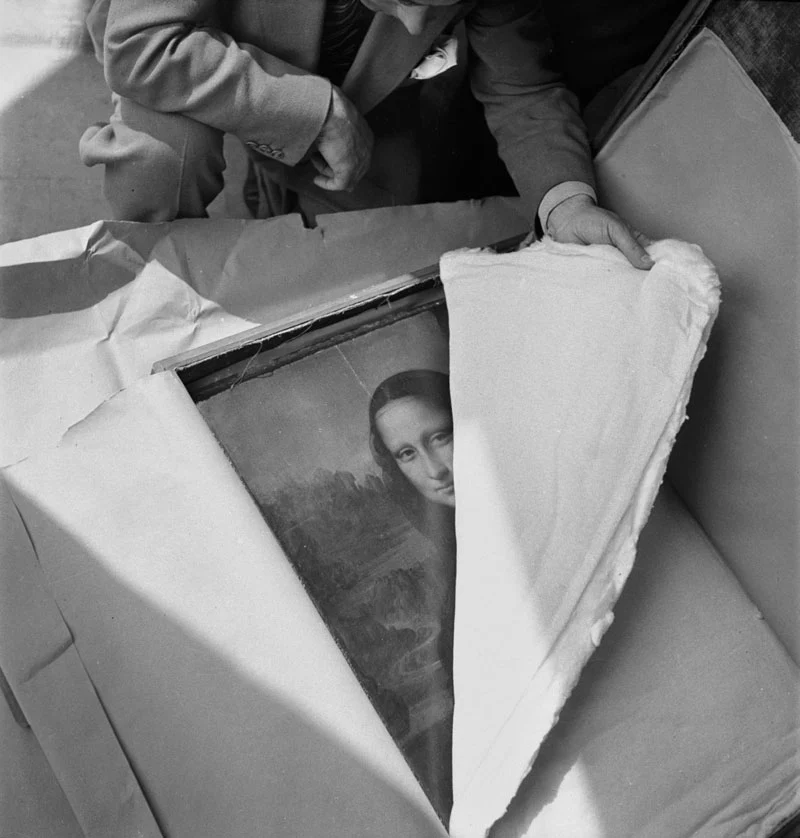

It is tempting to think of AI as a mirror reflecting the world back to us. It is more like a filter. Some details pass through, others get blurred, and some vanish entirely. The filter is shaped by what the model has seen, what its creators chose to include, and the priorities they set.

If we focus only on whether the picture looks flattering or unflattering, we miss the deeper question: what is missing from the frame?

The young man from Central Los Angeles never got the chance to explain the context of his Myspace photos. In the AI age, more of us will find ourselves in similar positions, judged by systems that have never met us and cannot see the full story.

Whatever your feelings about AI (hope, fear, or a mix of both), one skill is essential: we need to learn how to look for what the system misses. This suggests that AI is best thought of as a collaborator rather than a replacement worker. That is the starting point for making decisions in a technological landscape where gaps in the picture can be just as important as what is visible.

References

- Alvero, A. J., et al. (2019). AI and Holistic Review: Informing Human Reading in College Admissions.

- Aulck, L., et al. (2020). Increasing Enrollment by Optimizing Scholarship Allocations Using Machine Learning and Genetic Algorithms. International Educational Data Mining Society.

- Biswas, K., et al. (2022). Fuzzy and Genetic Algorithm Based Approach for Classification of Personality Traits Oriented Social Media Images. Knowledge-Based Systems.

- Borji, A. (2018). Negative Results in Computer Vision: A Perspective. Image and Vision Computing.

- Boyd, D. (2014). It’s Complicated: The Social Lives of Networked Teens. Yale University Press.

- Crawford, K., & Paglen, T. (2019). Excavating AI: The Politics of Images in Machine Learning Training Sets.

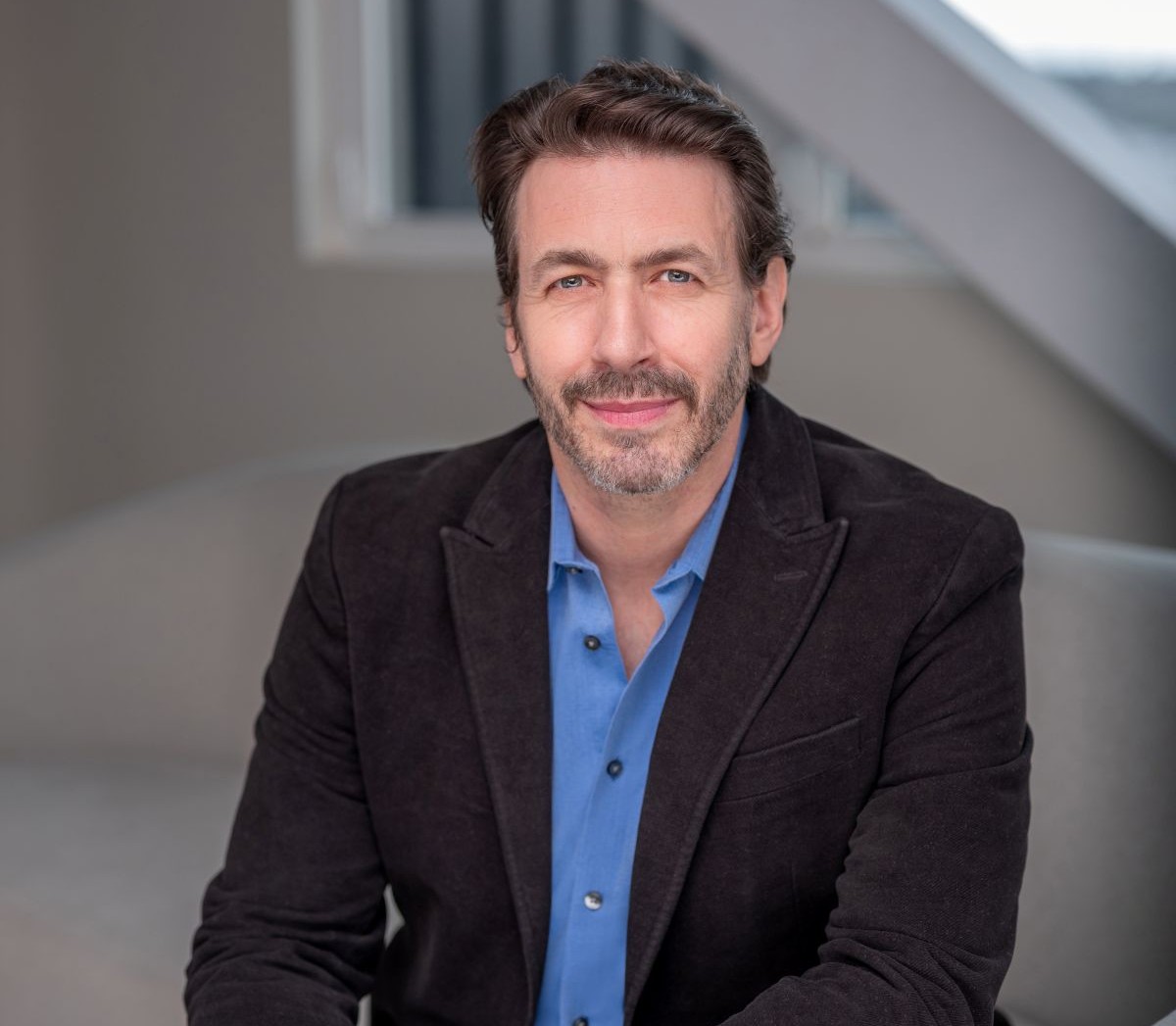

About the author

Dr. Robert Biswas-Diener

Dr. Robert Biswas-Diener is passionate about leaving the research laboratory and working in the field. His studies have taken him to such far-flung places as Greenland, India, Kenya, and Israel. He is a leading authority on strengths, culture, courage, and happiness and is known for his pioneering work in the application of positive psychology to coaching.

Robert has authored more than 75 peer-reviewed academic articles and chapters, four of which are “citation classics” (cited more than 1,000 times each). Dr. Biswas-Diener has authored nine books, including the 2007 PROSE Award winner, Happiness, the New York Times Best Seller, The Upside of Your Dark Side, the 2023 coaching book Positive Provocation, and Radical Listening, in 2025.

Thinkers50 named Robert to be among the 50 most influential executive coaches in the world.
